Welcome to SwedenCpp

Latest blogs, videos, podcasts and releases in one stream

Wednesday, June 10, 2026

Linux as the Conductor - Driving Pre-Compiled Audio DSP Kernels on C7x for Real-Time Processing🎥audiodevcon

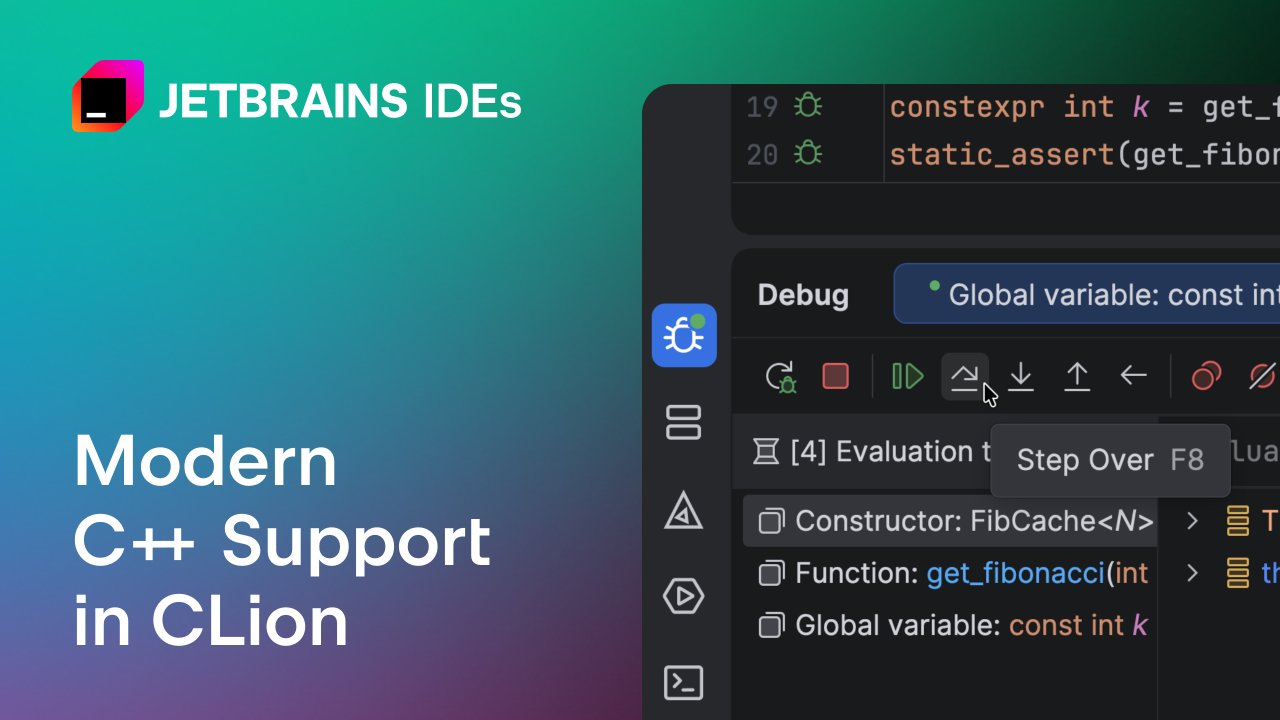

Modern C++ Support in CLion: What’s New
Modern C++ makes advanced high-performance techniques more accessible, with features like compile-time computation, zero-overhead abstractions, and expressive template code. But as your codebase grows, your ability to use these techniques productively depends heavily on how well your tooling understands them. Without proper language engine support, modern C++ features can lead to false positives, broken navigation, […]📝CLion : A Cross-Platform IDE for C and C++ | The JetBrains Blog

cppreference as your go to guide [Learn C++ Shorts Lesson 3]🎥Mike Shah

class properties and typeinfo - classes part 9 of N [D Language - Dlang Episode 147]🎥Mike Shah


Tuesday, June 9, 2026

C++26: Cleaning up string literals
The two papers we are covering today are complementary in a philosophical sense. They both improve how string literals are handled in C++26. P2361R6 tackles strings that are never evaluated, the ones that only exist at compile time. P1854R4 tackles evaluated string literals, making non-encodable characters ill-formed instead of implementation-defined. Let’s start with the unevaluated side. ...📝Sandor Dargo's Blog
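For the unevaluated side, here is a minimal sketch of a few positions where a string literal is only ever read by the compiler: a `static_assert` message, a linkage specification, and attribute messages. The function names are hypothetical. The code compiles as C++20; the closing comment reflects our reading of P2361R6, not output from a C++26 compiler.

```cpp
// None of the string literals below is evaluated: the compiler reads them,
// but the program never uses them as values.

// A static_assert message is an unevaluated string.
static_assert(sizeof(char) == 1, "sizeof(char) is 1 by definition");

// The "C" in a linkage specification is an unevaluated string.
extern "C" int c_linkage_add(int a, int b) { return a + b; }

// Attribute messages are unevaluated strings as well (messages need C++20).
[[nodiscard("the sum should not be discarded")]]
int checked_add(int a, int b) { return a + b; }

[[deprecated("use checked_add instead")]]
int legacy_add(int a, int b) { return a + b; }

// Under P2361R6, an encoding prefix in one of these positions, e.g.
//   static_assert(true, u8"message");
// is ill-formed in C++26, because the text is never turned into a value.
```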

Sovereign
The keyword in politics these days is ‘sovereign’. What few will admit is that it is effectively the adoption of the American strategy: Make America Great Again. In other words, reindustrialization of key sectors of the economy. The UK used to be a computing champion. Our chip designs (ARM) originated from the UK. Canada had …📝Daniel Lemire's blog

The Microsoft Company Party where everybody played name tag swap
Even the boss got into the festivities.📝The Old New Thing

Why I Fell for C++ — Ruben Perez Hidalgo, author of Boost.MySQL🎥C++ Alliance

Monday, June 8, 2026

Monads Meet Mutexes - Arne Berger - C++Online 2026🎥CppOnline

Rotation revisited: Shuffling more than three blocks, and other small notes
Generalizing the shuffle to arbitrary numbers of blocks.📝The Old New Thing
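One well-known in-place way to reorder any number of adjacent blocks is to reverse the whole range and then reverse each block on its own. This turns A B C D into D C B A, with every block's contents back in their original order. The sketch below assumes that reordering is the goal; the post itself may describe a different shuffle, and `reverse_block_order` is a hypothetical helper.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Reverses the order of consecutive blocks in `v` while keeping the element
// order inside each block. `sizes` lists the block lengths front to back and
// must sum to v.size().
template <class T>
void reverse_block_order(std::vector<T>& v, const std::vector<std::size_t>& sizes) {
    // Step 1: reverse everything. The blocks are now in reverse order,
    // but each block's contents are reversed as well.
    std::reverse(v.begin(), v.end());

    // Step 2: visit the blocks in their new order (last original block first)
    // and reverse each one to restore its internal order.
    auto first = v.begin();
    for (auto it = sizes.rbegin(); it != sizes.rend(); ++it) {
        auto last = first + static_cast<std::ptrdiff_t>(*it);
        std::reverse(first, last);
        first = last;
    }
}
```

With exactly two blocks this reduces to the familiar three-reversal rotation.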

C++ Weekly - Ep 536 - Devirtualization and performance with final🎥Jason Turner

On deducing this in C++23 and ref qualifiers🎥MeetingCpp

Faking keyword arguments to functions in C++
One of the many nice language features in Python is keyword arguments. They make some types of APIs concise and readable. Like so: Unfortunately, C does not have keyword arguments and, by extension, neither does C++. Adding them as a language feature would take 15-20 years of effort, most of which would consist of trying to convince people via email that such a feature is important and should be added. There have been attempts to implement this via macros and template magic (link), but they have not seen widespread usage, probably because they are using macros and template magic. However, it turns out that with modern language features you can fake keyword arguments fairly convincingly. Like so: The add_argument method takes a single argument that is a struct. The extra curly braces inside the parentheses boil down to "whatever the underlying argument is, construct it in place with these parameters". The dotted names are designated initializers, so those fields get the specified value whereas other fields get their default values. And there you go, keyword arguments in C++. You just have to squint a bit and pretend not to see the extra curly braces.📝Nibble Stew
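A minimal sketch of the pattern described above, assuming C++20 designated initializers. `ArgumentSpec`, `ArgParser` and its fields are hypothetical stand-ins, since the post's actual code is not part of this excerpt.

```cpp
#include <string>
#include <vector>

// Hypothetical option struct: every field has a default, so callers only
// spell out the "keyword arguments" they care about.
struct ArgumentSpec {
    std::string name;
    std::string help;
    bool required = false;
    int nargs = 1;
};

// Hypothetical parser; only the calling convention matters here.
class ArgParser {
public:
    void add_argument(const ArgumentSpec& spec) { specs_.push_back(spec); }
    const std::vector<ArgumentSpec>& specs() const { return specs_; }

private:
    std::vector<ArgumentSpec> specs_;
};

// The inner braces build an ArgumentSpec in place; the designated
// initializers name the fields, and omitted fields keep their defaults.
inline ArgParser make_example_parser() {
    ArgParser parser;
    parser.add_argument({.name = "--input", .required = true});
    parser.add_argument({.name = "--verbose", .help = "print more output", .nargs = 0});
    return parser;
}
```

One difference from Python: designators must follow the struct's declaration order, so writing `.required` before `.name` in the calls above would not compile.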

Low Latency Android Audio with improved CPU Performance - Phil Burk - ADC 2025🎥audiodevcon

Hello World even more modern in C++ [Learn C++ Shorts Lesson 2]🎥Mike Shah

Sunday, June 7, 2026

Tobias Hieta: A Brief Overview of the LLVM Architecture🎥SwedenCpp

Git is not the only option🎥Tsoding

Developing for Avid’s Audio Ecosystem - Rob Majors - ADCx India 2026🎥audiodevcon

C++ 20 Modules Brief Introduction | Modern Cpp Series Ep. 248🎥Mike Shah

C++26 Reflection gives us universal template parameters
Keenan Horrigan on the std-proposals mailing list pointed out an interesting consequence of C++26 Reflection: it seems to give us “universal template parameters” almost for free.📝Arthur O’Dwyer

Saturday, June 6, 2026

How much do amd64 microarchitecture levels help in Go?
Our 64-bit Intel and AMD processors have evolved over decades. When you compile a Go program for a 64-bit Intel or AMD processor, the compiler targets, by default, a nearly 20-year-old instruction set. The binary that comes out runs on essentially any x64 chip, but it also leaves on the table every instruction that was …📝Daniel Lemire's blog

The memory reclamation problem and two new solutions in C++26. A lecture at iSpring University.🎥Konstantin Vladimirov

Friday, June 5, 2026

Purging Undefined Behavior and Intel Assumptions in a Legacy C++ Codebase🎥CppOnline

The back cover of C++: The Programming Language also raises questions not answered by the front cover
Not doing the reading.📝The Old New Thing

Rotation revisited: Avoiding having to calculate the gcd when doing cycle decomposition
Math is hard. Let's go counting!📝The Old New Thing
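A minimal sketch of the idea as we read it from the summary. Rotating left by k moves the element at index (i + k) mod n to index i, and these moves split into independent cycles. The classic "juggling" version first computes gcd(n, k), the number of cycles; this version instead counts placed elements and stops at n. `rotate_left_by_cycles` is a hypothetical name; this is not the post's code or any standard library's.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Rotates v left by k positions using cycle decomposition, without gcd.
template <class T>
void rotate_left_by_cycles(std::vector<T>& v, std::size_t k) {
    const std::size_t n = v.size();
    if (n == 0) return;
    k %= n;
    if (k == 0) return;

    std::size_t moved = 0;  // elements placed in their final position so far
    // Every cycle contains exactly one index below gcd(n, k), so starting
    // cycles at 0, 1, 2, ... and stopping once all n elements have been
    // placed visits each cycle exactly once.
    for (std::size_t start = 0; moved < n; ++start) {
        T tmp = std::move(v[start]);
        std::size_t cur = start;
        for (;;) {
            std::size_t next = cur + k;
            if (next >= n) next -= n;  // cur < n and k < n, so one subtraction suffices
            if (next == start) break;  // cycle closed
            v[cur] = std::move(v[next]);
            cur = next;
            ++moved;
        }
        v[cur] = std::move(tmp);  // last slot of the cycle gets the saved element
        ++moved;
    }
}
```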

Instrumenting the Stack: Strategies for End-to-end Sanitizer Adoption - Damien Buhl - CppCon 2025🎥CppCon

June's Overload Journal has been published.
The June 2026 ACCU Overload journal has been published and should arrive at members' addresses in the next few days. Overload 193 and previous issues of Overload can be accessed via the Journals menu.📝ACCU

Music Design and Systems - Achieving Inaudibly Complex Systems in Video Games - Liam Peacock - ADC🎥audiodevcon

C++: The Documentary released today
C++: The Documentary premiered today on YouTube, and it was great to be on the live chat with Bjarne and many other key folks who participated in C++’s history. I’m honored to have been one of hundreds of people who have played a part in advancing Bjarne’s wonderful project over the years. If you haven’t …📝Sutter’s Mill

Branchless sorting of trivially relocatable types
A few days ago Christof Kaser posted a very impressive blog post on “Fast Branchless Quicksort using Sorting-Networks” (chkas/blqsort). A “branchless” algorithm is one designed to exploit modern processors’ conditional-move instructions. So for example the blqs::sort2 primitive, which looks like this:📝Arthur O’Dwyer
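The excerpt stops before showing `blqs::sort2`. As a stand-in, here is a hypothetical compare-exchange in the usual branchless style: compute both outcomes, then let a condition select between them. For scalar types, compilers commonly (but not always) lower this to conditional moves. It is not the blqsort code, and it does not touch the trivial-relocatability angle of the post.

```cpp
#include <utility>

// Hypothetical branchless compare-exchange: afterwards a <= b.
// Both candidates are computed up front and the condition only selects
// between them, so the source contains no data-dependent jump.
// Whether the compiler actually emits cmov depends on type, target and flags.
template <class T>
void sort2_branchless(T& a, T& b) {
    const bool swap_needed = b < a;
    T lo = swap_needed ? b : a;
    T hi = swap_needed ? a : b;
    a = std::move(lo);
    b = std::move(hi);
}

// A three-element sorting network built from compare-exchanges:
// comparators (0,1), (1,2), (0,1).
template <class T>
void sort3_branchless(T& a, T& b, T& c) {
    sort2_branchless(a, b);
    sort2_branchless(b, c);
    sort2_branchless(a, b);
}
```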

Thursday, June 4, 2026

What’s New in vcpkg (May 2026)
This release includes major library updates for Boost 1.91, Qt 6.11, and OpenCASCADE 8.0, along with 27 new ports and over 500 port updates.📝C++ Team Blog

Polyhedron Processing Improvements in VTK
Polyhedral cells (general convex or non-convex 3D cells with arbitrary face and vertex counts) appear throughout large-scale simulation, particularly in CFD. Some solvers produce them as the dual of a tetrahedral mesh; others use them as transition cells across refinement boundaries; still others build directly on face-based polyhedral connectivity as the native primitive. Several commercial and open-source CFD codes have invested in face-based arbitrary polyhedral cells as a first-class primitive, and that investment runs all the way through the pre- and post-processing pipeline because handling polyhedra well at every stage is non-trivial.📝Kitware Inc
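To illustrate what face-based polyhedral connectivity means, here is a minimal, library-independent sketch. A cell is stored as a list of faces, each face a list of point ids, and a simple closedness check verifies that every edge is shared by exactly two faces. `FacePolyhedron`, `is_closed` and `make_cube` are hypothetical and are not VTK's API or storage layout.

```cpp
#include <algorithm>
#include <cstddef>
#include <map>
#include <utility>
#include <vector>

// Hypothetical face-based polyhedron: each face lists point ids in order
// around the face; face and vertex counts are arbitrary.
struct FacePolyhedron {
    std::vector<std::vector<int>> faces;
};

// A polyhedral cell bounds a closed region only if every edge is used by
// exactly two faces. Edges are keyed with the smaller id first so that
// (a, b) and (b, a) count as the same edge.
bool is_closed(const FacePolyhedron& cell) {
    std::map<std::pair<int, int>, int> edge_uses;
    for (const auto& face : cell.faces) {
        if (face.size() < 3) return false;  // degenerate face
        for (std::size_t i = 0; i < face.size(); ++i) {
            const int a = face[i];
            const int b = face[(i + 1) % face.size()];
            ++edge_uses[{std::min(a, b), std::max(a, b)}];
        }
    }
    return std::all_of(edge_uses.begin(), edge_uses.end(),
                       [](const auto& entry) { return entry.second == 2; });
}

// Hexahedron with points 0-3 on the bottom face and 4-7 on the top face.
inline FacePolyhedron make_cube() {
    return {{{0, 3, 2, 1}, {4, 5, 6, 7}, {0, 1, 5, 4},
             {1, 2, 6, 5}, {2, 3, 7, 6}, {3, 0, 4, 7}}};
}
```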

How long does it take for an Item to become visible?
Qt Quick doesn’t drop frames - but it can render them later than expected. This article presents a practical C++ technique to measure when a QQuickItem actually becomes visible, identify late-rendered components, and quantify delays in dropped frames using the Qt scene graph lifecycle.📝KDAB

Rotation revisited: Cycle decomposition in clang’s libcxx
Rotating in the minimum number of steps by performing cycle decomposition.📝The Old New Thing
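From the caller's side, all of this sits behind `std::rotate`. Here is a small usage sketch; `rotate_left_std` is a hypothetical helper. Which internal strategy a given standard library picks (cycle decomposition, reversals, or block swaps) is an implementation detail, and the sketch does not assume one.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Rotates v left by k positions and returns the index where the original
// first element ended up.
inline std::size_t rotate_left_std(std::vector<int>& v, std::size_t k) {
    if (v.empty()) return 0;
    k %= v.size();
    // std::rotate(first, middle, last) makes *middle the new first element
    // and, since C++11, returns an iterator to where *first now lives.
    auto it = std::rotate(v.begin(), v.begin() + static_cast<std::ptrdiff_t>(k), v.end());
    return static_cast<std::size_t>(it - v.begin());
}
```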

Do concepts improve deducing this?📝Meeting C++ blog

Choosing Values for Robust Tests
This article was adapted from a Google Tech on the Toilet (TotT) episode. You can download a printer-friendly version of this TotT episode and post it in your office.

By Radion Khait

A test passes. Great! But does it really mean your code is working as expected? Not necessarily. Sometimes the values you choose in your tests can create a false sense of security, especially when dealing with default values. Consider this snippet of a simple map class and its corresponding unit test:

Implementation:

```cpp
void MyMap::insert(int key, int value) {
  // Oops! operator[] value-initializes the map entry (to 0);
  // the second parameter is never used.
  internal_map_[key];
}
```

Test:

```cpp
TEST(MyMapTest, Insert) {
  MyMap my_map;
  my_map.insert(1, 0);
  // This passes!
  EXPECT_EQ(my_map.get(1), 0);
}
```

The test passes, but the insert method is broken! It never actually stores the value. The test only passes because the default value for an integer in the map (0) happens to match the value used in the test.

When choosing test values, consider the following:

- Test with non-default values. Explicitly test with values different from the type's default (e.g., non-zero numbers, non-empty strings, enum values other than the one at index 0). This provides greater confidence that your code is actually using the provided input.

```cpp
TEST(MyMapTest, Insert) {
  MyMap my_map;
  my_map.insert(1, 5);
  // This test would fail and reveal the bug in
  // the implementation above: "Expected 5, got 0".
  EXPECT_EQ(my_map.get(1), 5);
}
```

- Test multiple inputs that cover different scenarios, where it is reasonable to do so. Consider empty/missing/null values, numerical boundaries, and special cases that trigger complex logic. Try to cover all distinct code/logic paths. Consider using fuzzing to more thoroughly cover the input domain.
- Use different values for each input. This guarantees the code under test doesn't accidentally reuse a single input or switch their order. Parameterized testing can also help test a large variety of inputs with minimal code duplication.

```cpp
TEST(MyMapTest, Insert) {
  MyMap my_map;
  // Use a different value for `key` and `value`.
  my_map.insert(/*key=*/1, /*value=*/2);
  EXPECT_EQ(my_map.get(1), 2);
}
```

📝Google Testing Blog
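The excerpt never shows `MyMap` itself or a corrected `insert`. Below is a hypothetical, self-contained version backed by `std::map`, with the buggy and fixed inserts side by side, so the effect of the chosen test value can be checked with plain `assert`s instead of GoogleTest. Everything beyond `insert` and `get` is assumed.

```cpp
#include <map>

// Hypothetical MyMap: only insert/get appear in the article.
class MyMap {
public:
    // The bug from the article: operator[] value-initializes the entry
    // (to 0 for int), and the value parameter is ignored.
    void insert_buggy(int key, int /*value*/) { internal_map_[key]; }

    // The fix: actually store the value.
    void insert(int key, int value) { internal_map_[key] = value; }

    // Returns the stored value, or 0 when the key is missing (an assumption).
    int get(int key) const {
        auto it = internal_map_.find(key);
        return it == internal_map_.end() ? 0 : it->second;
    }

private:
    std::map<int, int> internal_map_;
};
```

With `insert_buggy`, a test that inserts 0 still sees 0 and passes. Inserting 5 exposes the bug, because `get` returns 0.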

Changes to ACCU's Terms and Conditions
ACCU's committee is announcing that the organisation's Terms and Conditions for both paid and trial memberships have changed. Specifically, ACCU is no longer offering early access to conference videos. Visit the following links for the updated Terms and Conditions:

- Paid Membership Terms and Conditions
- Trial Membership Terms and Conditions

📝ACCU

Final Classes - classes part 8 of N [D Language - Dlang Episode 146]🎥Mike Shah

Managed jobs in Unity
In Unity’s job system, you write jobs as structs like this:

```csharp
struct MyJob : IJob
{
    public void Execute()
    {
        // your code here
    }
}
```

One of the restrictions of Unity’s job system is that these job structs must be entirely unmanaged, meaning that you may not put any...📝Sebastian Schöner
